Actionable Analytics the Ethical Way: Model Assessment Drives Transparency and Trust

How Rapidly Evolving AI-Powered Analytics Are Being Adopted to Support Student Success

The application of advanced Artificial Intelligence (AI) and Machine Learning (ML) algorithms to institutional student data offers powerful opportunities to enhance university support. By predicting academic challenges before they occur, institutions can proactively connect students with resources and advising, improving both individual outcomes and the broader educational environment. Predicting the needs of a new student cohort also helps institutions distribute resources more effectively. The result is a win-win: providing high-quality, targeted support while maintaining the financial and workforce sustainability of the institution.

This approach was succinctly expressed by Mark David Milliron, President and CEO of National University, during a panel on the future of data (see the key takeaway in "What's Next for Using Data to Support Students?", The Chronicle of Higher Education, retrieved January 22, 2026):

“We think about how to use the best of data science, design thinking, and domain expertise together to make a difference. You have to get clean, solid data, qualitative and quantitative, pulled together in the right way, and get it to the right people with the right tools and techniques.”

At Indiana University, this capacity has been under development for a decade. Through pioneering applications of advanced analytics and partnerships with campus leadership (see e.g., Fiorini et al. 2018, Rust and Motz 2025), we have made significant strides. Over the past two years, the Indianapolis campus has achieved powerful results by breaking down data silos, refining data science techniques, and fostering deep partnerships between advising units and domain experts (Day 2026).

As these tools become more sophisticated and pervasive (e.g., AIR Professional File Fall 2023 Volume), there is a growing recognition that a mindful, informed approach must guide the use of advanced analytics. Anyone trained in research knows that data and analytical tools have inherent limitations. In foundational statistical training, we are introduced to concepts such as specification errors and violations of model assumptions—like heteroscedasticity or multicollinearity.

Approaching ML and AI as if they are immune to these methodological limitations is naive. Similarly, we must constantly assess a model's power to represent reality accurately. Recent work (e.g., Ju 2023, Göçen and Asan 2023, Klopfer et al. 2024, Mowreader 2024) has sought to define the specific potentials and pitfalls of AI in education. Without this care, these analytics risk unintended consequences, such as failing to respond to the full array of student needs, misrepresenting the likelihood of certain challenges impacting different subgroups of students, or centering the main problems in students themselves rather than in institutional structures or practices that are not yet adequate to support them. This is why the Association for Institutional Research (AIR) emphasizes that data-informed practices have a profound impact on the lives of the students and communities we serve (Appel 2019).

Developing and Deploying High Quality, Ethical, and Actionable Analytics for Student Success

Through intentional, cross-functional collaboration, we developed an approach grounded in the following practices and principles:

- Inclusion and participation of those who will use the analytics from the outset, both to provide input on model development and to evaluate the quality and effectiveness of the models (Fiorini et al. 2018).

- The development of explainable AI, particularly through Shapley variable importance plots and lists of top features, to unpack the elements affecting an outcome and make model predictions more actionable (Gopinath 2021, Murphy 2022).

- Bias mitigation: the adoption of industry standards and tools to evaluate the presence and magnitude of model bias in predictions and to implement corrections where necessary (Bellamy et al. 2019, Prince 2019, Gándara et al. 2024).

- Implementation of assertive, student-centered engagement methods throughout the semester, including proactive advising (relationship-focused advising meetings held in the first half of the semester), strategic communication tied to discrete behavioral changes in students, and coordination with key academic centers to provide support. This effort is analytically grounded through a cyclical refresh of predictive models at two-week intervals, monitoring of Canvas Activity Scores (CAS) (Rust and Motz 2025), and use of the Student Engagement Roster (SER), an integrated system ensuring student needs are met at the course level (see Day 2026). The human in the middle ensures resilience in the system and guarantees that no student need is overlooked.

- Continuous improvement, by conducting rigorous impact assessments at the end of each semester to refine both models and interventions. This process identifies and strengthens synergies between student support units and optimizes the coordination of resources and actions.

In this system, advising, academic, and student-life support are at the core. Analytics complement and deepen the quality and range of information that professionals can rely on. Analytics never replace professional judgment or limit student access to available campus resources. We operate under the assumption that the absence of a prediction does not mean the absence of a support need. Analytics also inform new semester improvement plans and targets.

Analytic and Model Quality Assessment

The advising predictive analytics we have developed follow a three-phase framework that enables proactive, continuous student support. The process starts before the semester begins, using pre-enrollment data such as academic preparation, orientation participation, financial aid information, course registration, and course-related academic demands to predict the likelihood of success for incoming students. These early insights allow advisors to take targeted action, tailoring outreach and interventions to address specific barriers students may face.

Once the semester is underway, our approach incorporates biweekly updates to predictions using dynamic data streams such as learning management system (Canvas) activity data, engagement roster notifications (instructor feedback on student performance), course drops, and assessment performance. Building these timepoint models, which account for changes in how students engage academically during the semester, has been particularly powerful in focusing faculty attention on how they can influence student success. For example, the analysis showed that engagement roster notifications submitted by the end of week 3 are highly predictive of end-of-semester grades. The campus practice had been to allow faculty to enter feedback at any time they wished, but our policy required reports by week 6. With this new knowledge, the provost set a new expectation of reporting in all lower-level courses by the start of week 4, ensuring that this actionable information was available for more students significantly earlier in the semester.

The biweekly updates generate prioritized lists of students needing support, empowering advisors to adapt their strategies as circumstances change. In addition to identifying priority students, we aggregate students’ changes in the probability of experiencing an adverse outcome at the program level, allowing us to evaluate collective risk patterns and the effectiveness of advising interventions. This helps leaders understand where entire programs or cohorts may need additional resources and enables advisors to refine interventions based on broader trends.
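The two outputs described above, a prioritized student list and a program-level view of how predicted probabilities shift between refreshes, can be sketched as follows. This is a minimal illustration using only the Python standard library; the record layout, student identifiers, program names, and probabilities are all hypothetical and do not come from the production system.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (student_id, program, previous_probability, current_probability).
# Each probability is the predicted chance of an adverse outcome from one model refresh.
records = [
    ("s01", "Biology", 0.20, 0.35),
    ("s02", "Biology", 0.50, 0.40),
    ("s03", "Nursing", 0.10, 0.45),
    ("s04", "Nursing", 0.30, 0.55),
    ("s05", "History", 0.60, 0.25),
]


def prioritized_students(rows, top_n=3):
    """Return the IDs of the top_n students by current predicted probability."""
    ranked = sorted(rows, key=lambda r: r[3], reverse=True)
    return [r[0] for r in ranked[:top_n]]


def program_level_change(rows):
    """Average change in predicted probability per program between two refreshes."""
    deltas = defaultdict(list)
    for _, program, prev_p, curr_p in rows:
        deltas[program].append(curr_p - prev_p)
    return {program: round(mean(vals), 3) for program, vals in deltas.items()}


priority = prioritized_students(records)
changes = program_level_change(records)
print(priority)
print(changes)
```

A positive program-level average suggests that predicted risk is rising across that program, which is the kind of collective signal that could prompt a closer look at resources or interventions. How the actual system weights or aggregates these changes is not specified here, so the simple mean is an assumption.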

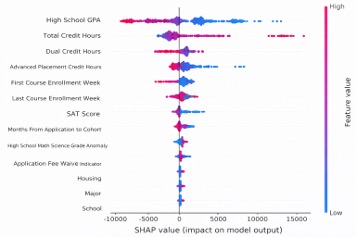

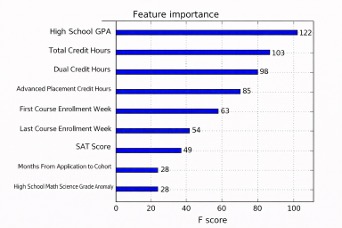

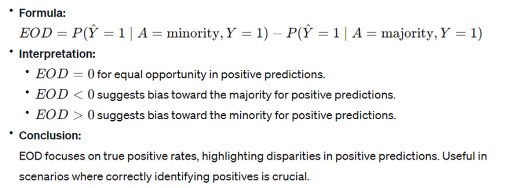

Because our goal is to improve institutional practice across the university, we are deeply committed to ensuring that the models we build are explainable, actionable, and unbiased. To achieve this, we employ techniques such as Shapley variable importance plots to unpack why a model generates its predictions and to identify the factors most strongly influencing outcomes (see the AI-generated example images in Figures 1 and 2 below, a SHAP summary plot and a feature importance plot). We also evaluate our models for bias using the Equal Opportunity Difference (EOD) metric, which measures differences in true positive rates between demographic groups. For an ML model to be considered fair, the calculated metric should ideally be close to 0 (see the Figure 3 text insert for the formula and explanation). When disparities are identified, we apply corrective strategies to reduce bias and support fair, transparent decision-making.
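To make the idea behind Shapley attributions concrete, the sketch below computes exact Shapley values for a single student under a toy scoring function. It is an illustration only: the scoring function, feature names, and baseline values are hypothetical, and a production workflow would more likely rely on an optimized library such as shap rather than this brute-force enumeration, whose cost grows exponentially with the number of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one instance.

    Features outside a coalition are set to their baseline value.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        # Evaluate the model with coalition features taken from the instance
        # and all remaining features taken from the baseline.
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(x)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for k in range(n):
            for subset in combinations(others, k):
                # Standard Shapley weight for a coalition of size k.
                weight = factorial(k) * factorial(n - k - 1) / factorial(n)
                total += weight * (value(set(subset) | {f}) - value(set(subset)))
        phi[f] = total
    return phi


# Hypothetical toy scoring function, NOT the model described in this article.
def toy_model(x):
    return (0.5 * x["hs_gpa"]
            + 0.02 * x["credit_hours"]
            + 0.1 * x["hs_gpa"] * x["on_time_enroll"])


baseline = {"hs_gpa": 3.0, "credit_hours": 12, "on_time_enroll": 0}  # assumed reference student
student = {"hs_gpa": 3.6, "credit_hours": 15, "on_time_enroll": 1}   # assumed example student

phi = shapley_values(toy_model, student, baseline)
for feature, contribution in phi.items():
    print(f"{feature}: {contribution:+.3f}")
```

The contributions sum to the difference between the student's score and the baseline score, which is what lets an advisor read each value as that feature's share of the prediction. A global importance chart like the one in Figure 2 is typically built by averaging the absolute values of these per-student contributions across many students.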

Bias in ML refers to systematic errors that lead to inaccurate or unfair outcomes. It can stem from various sources, such as data collection processes, differential access to academic support and university resources, or the algorithms themselves. Bias can significantly affect the reliability, fairness, and ethical implementation of AI systems. Hence, assessing and removing model bias is crucial for ensuring the equitable treatment of student needs, regardless of students' backgrounds. This is particularly important in education applications, where biased models could exclude individuals from accessing needed resources. It is therefore now standard practice at our institution to evaluate model quality and impact before deployment, ensuring that the factors examined in our models stay within thresholds where bias is minimized; if these thresholds are exceeded, we implement the necessary corrections. The results give administrators and advising and program staff the transparency and accountability they need in interpreting the models.
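A pre-deployment check of this kind can be sketched in a few lines. The example below computes the Equal Opportunity Difference as the true positive rate of one group minus that of another and flags the model for review when the gap exceeds a tolerance. The sign convention (unprivileged minus privileged) follows the one used by the AI Fairness 360 toolkit; the labels, group codes, and the 0.1 tolerance are assumptions chosen for illustration, not the thresholds used at our institution.

```python
def true_positive_rate(y_true, y_pred):
    """Share of actual positives that the model also predicted as positive."""
    preds_for_positives = [p for t, p in zip(y_true, y_pred) if t == 1]
    if not preds_for_positives:
        raise ValueError("group has no actual positives; TPR is undefined")
    return sum(preds_for_positives) / len(preds_for_positives)


def equal_opportunity_difference(y_true, y_pred, group, unprivileged, privileged):
    """TPR of the unprivileged group minus TPR of the privileged group."""
    def tpr_for(label):
        idx = [i for i, g in enumerate(group) if g == label]
        return true_positive_rate([y_true[i] for i in idx],
                                  [y_pred[i] for i in idx])
    return tpr_for(unprivileged) - tpr_for(privileged)


# Hypothetical outcomes (1 = positive outcome) and model predictions for ten students.
y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0, 0, 1]
group = ["A"] * 5 + ["B"] * 5  # hypothetical demographic group codes

eod = equal_opportunity_difference(y_true, y_pred, group,
                                   unprivileged="B", privileged="A")

THRESHOLD = 0.1  # assumed tolerance, for illustration only
needs_review = abs(eod) > THRESHOLD
print(f"EOD = {eod:+.2f}, needs review: {needs_review}")
```

In this toy data the model correctly identifies a smaller share of positive outcomes in group B than in group A, so the check flags the model. What correction follows (reweighting, threshold adjustment, or another method) is a separate decision that this sketch does not cover.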

Figure 1. SHAP Summary Plot

This chart shows how different student characteristics influence the model’s predictions. Each row represents a feature, and the dots show whether that feature increases or decreases the predicted outcome. Features like high school GPA, total credit hours, and test scores have the strongest impact on the model.

Figure 2. Feature Importance

This chart shows which factors matter most to the model overall. High school GPA and total credit hours are the most important predictors, followed by other measures of coursework and enrollment timing. Larger bars indicate features that the model relies on more when making predictions.

Figure 3. Equal Opportunity Difference (EOD)

This figure explains the Equal Opportunity Difference metric, which compares how often the model correctly predicts positive outcomes for minority and majority groups. An EOD value close to zero indicates similar performance across groups, while positive or negative values suggest potential bias favoring one group over the other.
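Because the formula in Figure 3 appears only in the image, one common way of writing the metric is given here for reference. The subscripts and the ordering of the two terms are assumptions that follow the AI Fairness 360 convention; the figure itself may order the groups differently.

```latex
\mathrm{EOD} = \mathrm{TPR}_{\text{minority}} - \mathrm{TPR}_{\text{majority}},
\qquad
\mathrm{TPR}_{g} = \frac{\mathrm{TP}_{g}}{\mathrm{TP}_{g} + \mathrm{FN}_{g}}
```

Here $\mathrm{TP}_{g}$ and $\mathrm{FN}_{g}$ are the counts of true positives and false negatives within group $g$, so $\mathrm{EOD} = 0$ means the model identifies actual positive outcomes at the same rate in both groups.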

Conclusions

The integration of AI-powered analytics at institutions of higher education signifies a transformative shift toward a more proactive and impartial student success strategy. By synthesizing data science, design thinking, and domain expertise, higher education professionals can move beyond reactive support to a model that identifies student needs with precision and empathy. Our experience at the Indiana University Indianapolis campus demonstrates that when data silos are dismantled and replaced with deep, cross-functional partnerships, the results are powerful, benefiting both the individual learner and the long-term sustainability of the institution.

However, the pervasiveness of these tools demands a commitment to "actionable analytics in an ethical way." We reject the naive assumption that machine learning is immune to methodological error. Instead, we encourage the adoption of institutionalized transparency through explainable AI and rigorous bias mitigation. By employing metrics like the Equal Opportunity Difference (EOD), we can ensure that our models provide a fair chance of success for every student, regardless of their background.

Ultimately, the framework we suggest and have implemented is anchored by the "human in the middle." Analytics serve to complement, not replace, the professional judgment of advisors and faculty. By remaining dedicated to a cycle of continuous improvement, refining models through lived experience and impact assessments, we can ensure that higher education institutions are places where every student is seen, supported, and empowered to graduate.

References:

Appel, M. S. (2019). A statement for a new era: The Association for Institutional Research Statement of Ethical Principles. New Directions for Institutional Research, 2019(183), 39–45. https://doi.org/10.1002/ir.20311

Bellamy, R. K. E., Dey, K., Hind, M., Hoffman, S. C., Houde, S., Kannan, K., Lohia, P., Martino, J., Mehta, S., Mojsilović, A., Nagar, S., Ramamurthy, K. N., Richards, J., Saha, D., Sattigeri, P., Singh, M., Varshney, K. R., & Zhang, Y. (2019). AI fairness 360: An extensible toolkit for detecting and mitigating algorithmic bias. IBM Journal of Research and Development, 63(4/5), 4:1–4:15. https://doi.org/10.1147/JRD.2019.2942287

Chu, W., Hosseinalipour, S., Tenorio, E., Cruz, L., Douglas, K., Lan, A., & Brinton, C. (2023). Multi-layer personalized federated learning for mitigating biases in student predictive analytics. arXiv preprint arXiv:2212.02985.

Day, J. (n.d.). Predictive analytics and holistic support guide student success strategy. IU News. Retrieved January 22, 2026, from https://news.iu.edu/in-undergrad/live/news/48249-predictive-analytics-and-holistic-support-guide

Fiorini, S., Sewell, A, Bumbalough, M., Chauhan, P., Shepard, L., Rehrey, G. and Groth, D. (2018). An application of participatory action research in advising-focused learning analytics. In Proceedings of the 8th International Conference on Learning Analytics and Knowledge (LAK ’18) (pp. 113–117). Association for Computing Machinery. https://doi.org/10.1145/3170358.3170387

Gándara, D., Anahideh, H., Ison, M. P., & Picchiarini, L. (2024). Inside the black box: Detecting and mitigating algorithmic bias across racialized groups in college student-success prediction. AERA Open, 10, 1–19. https://doi.org/10.1177/23328584241258741

Göçen, A., & Asan, R. (2023). Generative artificial intelligence: Risks and benefits for educational institutions. Center for Open Science. https://doi.org/10.31219/osf.io/mvcb5.

Gopinath, D. (2021). The Shapley value for ML models. What is a Shapley value, and why is it crucial to so many explainability techniques? TowardsDataScience. https://towardsdatascience.com/the-shapley-value-for-ml-models-f1100bff78d1

Ju, Q. (2023). Experimental evidence on negative impact of generative AI on scientific learning outcomes. arXiv preprint arXiv:2311.05629.

Klopfer, E., Reich, J., Abelson, H., & Breazeal, C. (2024). Generative AI and K-12 education: An MIT perspective.

Kwon, Y., & Zou, J. Y. (2022). WeightedSHAP: Analyzing and improving Shapley based feature attributions. Advances in Neural Information Processing Systems, 35, 34363–34376. arxiv.org/pdf/2209.13429

Mowreader, A. (2024). Report: Predictive models may have bias against Black and Hispanic learners. Inside Higher Ed. Predictive models in higher ed disadvantage some students (insidehighered.com)

Murphy, A. (2022). Shapley values – A gentle introduction. H2O.ai Blog. https://h2o.ai/blog/shapley-values-a-gentle-introduction/

Prince, S. (2019). Tutorial #1: bias and fairness in AI. Borealis AI. Tutorial #1: bias and fairness in AI - RBC Borealis

Rust, M. M., & Motz, B. A. (2025). Incorporating an LMS learning analytic into proactive advising: Validity and use in a randomized experiment. The Internet and Higher Education, 101057.

Codebase of ’Shap’ GitHub - shap/shap: A game theoretic approach to explain the output of any machine learning model.

AI Fairness 360 toolkit - GitHub - Trusted-AI/AIF360: A comprehensive set of fairness metrics for datasets and machine learning models, explanations for these metrics, and algorithms to mitigate bias in datasets and models.

Article - Explore Fairness Metrics for Credit Scoring Model - MATLAB & Simulink (mathworks.com)

Package ‘SHAPforxgboost’. (2023). https://cran.r-project.org/web/packages/SHAPforxgboost/SHAPforxgboost.pdf

Package ‘Shap’ shap · PyPI

About the authors:

Stefano Fiorini, Ph.D., is a Social and Cultural Anthropologist with the Research and Analytics team, a subunit within Indiana University’s Institutional Analytics office. He has extensive applied research experience in the areas of institutional research and learning analytics. He has published in peer-reviewed journals and conference proceedings and presented at national and international conferences (e.g., AIR Annual Forum, CSRDE, LAK, LAP), earning best paper awards from INAIR, AIR, and SoLAR. He has also served as chair of project working groups and on editorial boards and conference organizing committees.

Rishika Samala has 9 years of experience working in Data Science and Research with a master's in data science. She currently serves as a data scientist with the Research and Analytics team, a subunit within Indiana University’s Institutional Analytics office. She has extensive work expertise in machine learning, deep learning, recommendations, and predictive analytics from various domains including automotive, health research and manufacturing.

Tradara McLaurine, Senior Executive Director of Campus Career & Advising Services at IU Indianapolis, is a three-time alumna of Indiana State University, where she earned two bachelor’s degrees (one in accounting, one in legal studies) and a master’s degree in Student Affairs and Higher Education. She also holds a certification in Women’s Entrepreneurship from eCornell. She has over 15 years of experience working in higher education in the areas of career advising, academic advising, student conduct, conferences and events, programming, teaching, and residential life. Tradara currently holds certifications as a Certified Diversity Professional from the Institute of Diversity Certification, Certified Trainer for Understanding and Engaging Under-Resourced College Students from Bridges Out of Poverty, Certified Predictive Index Practitioner, and Certified Wellness & Health Coach.

Gina Deom has more than a decade of experience working in higher education data and research. She currently serves as the Team Lead of the Institutional Reporting Development unit within the Office of Institutional Analytics. Gina has given several presentations at national and international conferences, including the SHEEO Higher Education Policy Conference, the NCES STATS-DC Data Conference, the Learning Analytics and Knowledge (LAK) Conference, and the AIR Forum. Gina has earned best paper awards from INAIR, AIR, and LAK.

Ram Marupudi has 7 years of experience working in the field of data science and analysis and holds a master's degree in data science. He currently serves as a data scientist with the Research and Analytics team, a subunit within Indiana University’s Institutional Analytics office. He has extensive experience in machine learning operations and predictive analytics in verticals such as ERI, FSI, and higher education.

Christina Downey, Ph.D., serves as Vice Provost for Undergraduate Education at Indiana University Indianapolis. A clinical psychologist by training who completed her doctorate at the University of Michigan at Ann Arbor, Dr. Downey began her academic career at one of Indiana University’s regional campuses as an assistant professor and eventually served as Interim Executive Vice Chancellor for Academic Affairs. Since joining IU Indianapolis, she has focused on holistic academic support and student engagement to promote retention and graduation. She has published and presented on a variety of topics, including multicultural mental health and disorder, positive psychology, and leadership and change management.