AIR launched a community survey on February 5, 2026, to gather insight into institutional experiences with the IPEDS Admissions and College Transparency Supplement (ACTS). A total of 390 institutional research and data professionals from U.S. colleges and universities responded, sharing perspectives on institutional readiness, reporting capacity, and implementation challenges. Of respondents, 63% represent private, not-for-profit institutions, 36% represent public institutions, and 1% represent for-profit institutions.

Current Status of ACTS Work

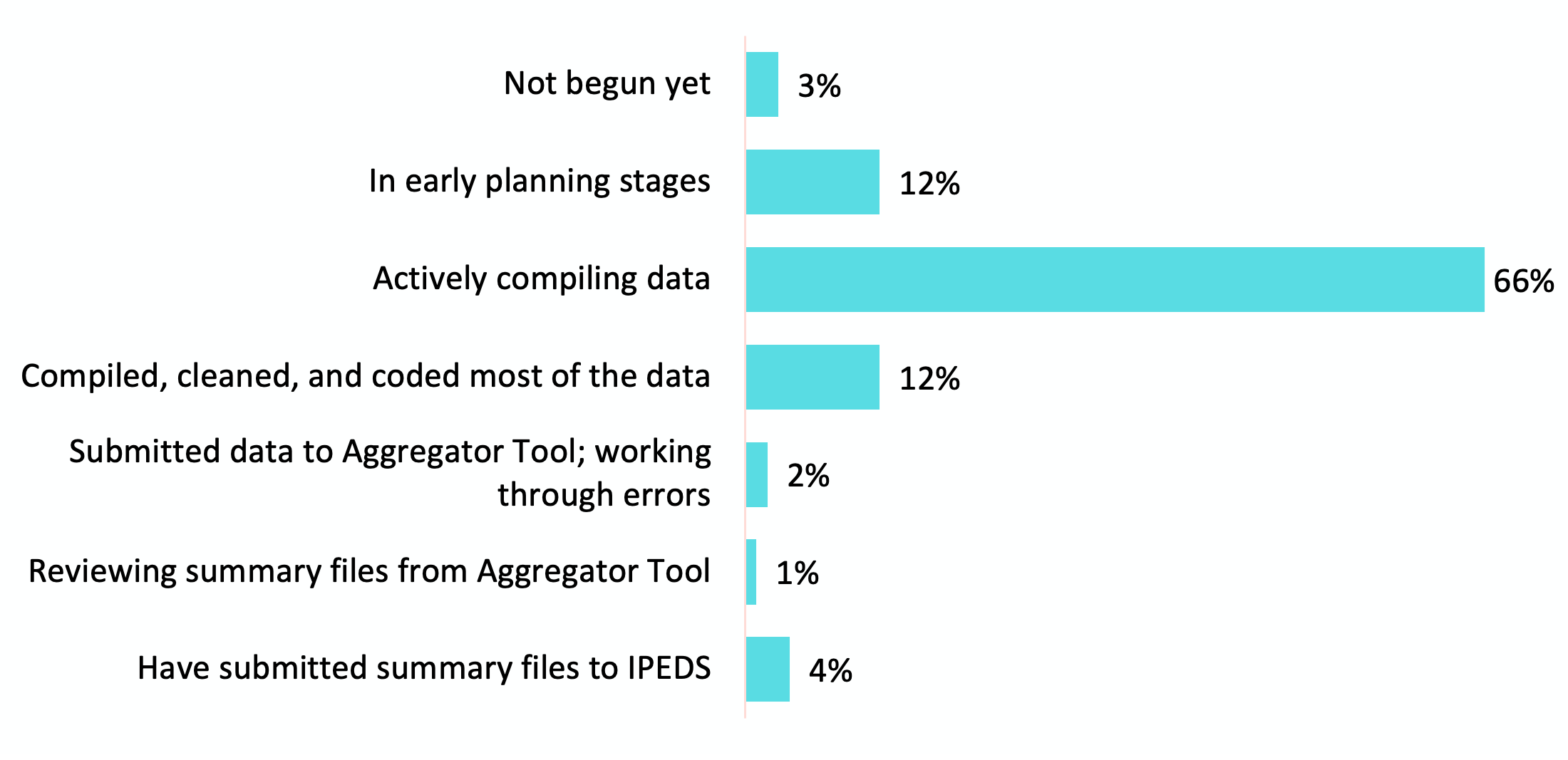

Survey responses indicate that, as of early February 2026, 81% of institutions had not yet begun, were in the early planning stages, or were actively compiling data for ACTS reporting, with few having reached final submission stages (Chart 1).

Chart 1. Progression of ACTS Reporting: Percentage of Respondents

When asked about their level of confidence in submitting ACTS data accurately by the March 18 deadline, responses indicate substantial concern across institutions. Most respondents (60%) reported being very or somewhat concerned about their institution’s ability to submit accurate data by the deadline, reflecting widespread uncertainty regarding data readiness, interpretation of definitions, and the time required to complete quality control processes. An additional 11% reported being neutral or unsure. Taken together, more than seven in ten institutions were either concerned or uncertain at the time of the survey. The remaining 29% reported being very or somewhat confident in their ability to submit accurate ACTS data by the deadline.

These findings suggest that, as of early February 2026, institutional confidence lagged behind reporting expectations. With a majority expressing concern and fewer than one-third expressing confidence, the data underscore the pressure institutions are experiencing as they work to meet the ACTS deadline while ensuring accuracy and compliance.

Open-ended responses suggest that institutional progress is constrained primarily by compressed timelines, retroactive data requirements, and concurrent IPEDS reporting obligations. Many respondents emphasized that compiling seven years of historical data, while managing other required submissions, has significantly slowed progress and reduced confidence in meeting the March 18 deadline.

Selected comments below reflect recurring themes across responses:

- “A 90-day turnaround is not reasonable to expect colleges and universities to be able to compile seven years' worth of data to meet requirements which were not being discussed twelve months ago.”

- “Building a single year of data is enough of a challenge… Asking for 7 years of data all at once is an extremely tall task.”

- “Asking for accurate information with a three-month window alongside the normal IPEDS submissions deadlines, is not possible.”

- “This is by far the most difficult and time-consuming project I have ever worked on.”

- “It is taxing staff and requiring after-hours work to complete this level of requirement.”

- “This collection is consuming a substantial share of our available capacity. The retroactive requirements are effectively displacing other critical duties.”

Challenges and Barriers

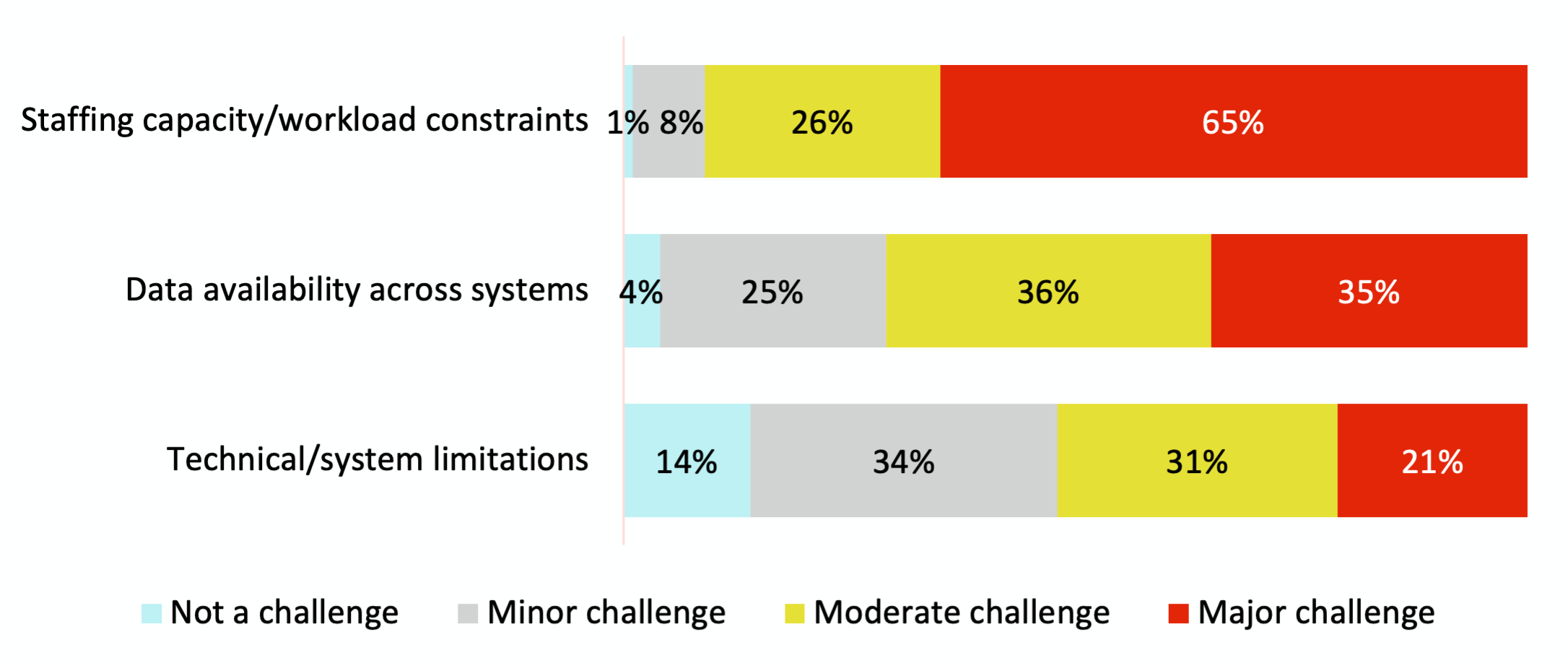

Findings from Chart 2 indicate that institutions are experiencing ACTS reporting primarily as a resource-intensive effort, with challenges concentrated in staffing capacity and the coordination of data across systems.

Staffing and workload pressures are not peripheral concerns; they are central to the implementation experience. Most respondents (91%) report that staff capacity is a moderate or major challenge, suggesting that ACTS reporting is competing directly with other core institutional responsibilities.

At the same time, institutions report widespread difficulty managing data across multiple systems. The need to locate, reconcile, and integrate data from different functional areas adds complexity and increases the time required to prepare submissions.

Taken together, the data suggest that ACTS implementation is constrained by limited staffing capacity and the operational complexity of coordinating data across systems. These capacity factors appear to be foundational drivers of institutions’ overall reporting experience.

Chart 2. Structural and Capacity Constraints

To better understand the structural and capacity constraints reflected in Chart 2, respondents were invited to describe their experiences in their own words. The comments below illustrate how staffing limitations and decentralized data systems are shaping institutions’ ability to compile and submit ACTS data.

Across responses, professionals emphasized the strain placed on small IR offices, the difficulty of managing reporting alongside existing responsibilities, and the complexity of working across multiple, non-integrated systems, particularly when reconstructing several years of historical data.

- “I’m a one-person IR and Assessment office and have no margin in my workload.”

- “For small offices (we're 2 FTE at a small college), this is a lot of additional work and it's hard to adjust to fit it in an already busy time of year.”

- “Given all the cuts to education, staffing is at its most stretched thin. I simply do not have the FTE for a project this large”

- “At our institution, the data comes from four distinct systems that do not talk to one another.”

- “Enterprise systems are designed to run a university, not to classify students and transactions into the very specific categories ACTS requires.”

- “Data systems change over time, and asking for 7 years of back data makes this a more difficult task.”

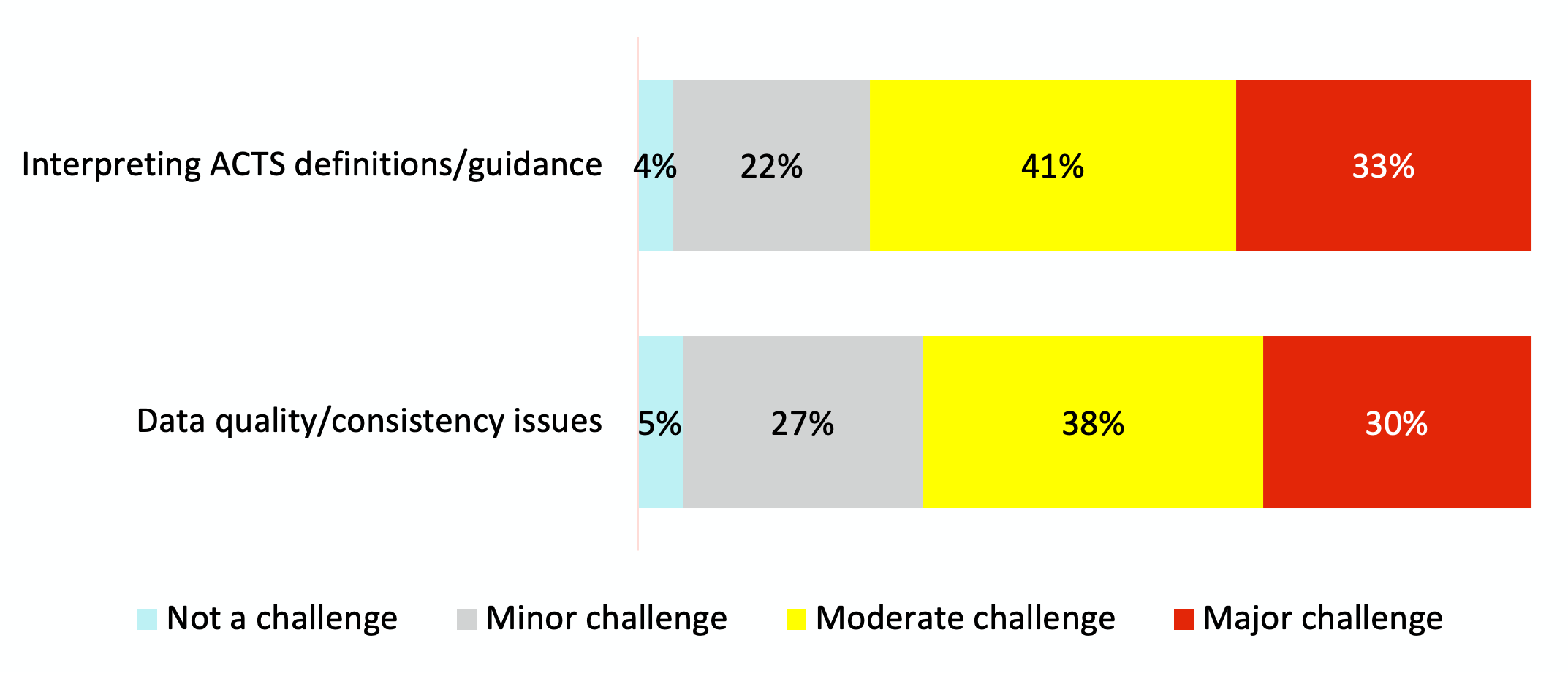

Survey results also indicate that institutions are facing challenges related to interpreting ACTS requirements and ensuring data consistency. Across both areas, most respondents reported experiencing these issues at a moderate or major level (Chart 3).

The results suggest that ACTS implementation is not only a matter of staff time and data extraction, but also one of interpretation. Institutions are navigating complex definitions, evolving guidance, and the need to reconcile ACTS requirements with existing reporting practices. Ensuring internal data consistency, particularly when working across systems or reconstructing historical information, adds another layer of difficulty.

Together, these findings indicate that institutions are operating in a reporting context that requires ongoing interpretation and adjustment. Institutions are not simply compiling data; they are working to interpret, align, and validate information as guidance and definitions are clarified. These interpretive demands compound structural capacity constraints and contribute to concerns about reporting accuracy and comparability.

Chart 3. Definitional Ambiguity and Process Instability

To further illustrate the challenges reflected in Chart 3, respondents described their experiences navigating evolving guidance, adjusted definitions, and multiple updates to technical processes. Many noted that differences in interpretation, template revisions during the collection window, and questions about quality control procedures have made it difficult to move forward with confidence. The comments below highlight how these ongoing adjustments are affecting institutions’ ability to compile, validate, and submit ACTS data accurately.

- “It’s difficult to move quickly when data definitions are unclear, the aggregator tool behaves unpredictably, or we find that other schools have received different guidance from the IPEDS help desk.”

- “IPEDS is providing conflicting responses to so many of the questions.”

- “We are 5 weeks from the due date, and the QC Review process is not online AND we do not know what the QC Review is using as a comparison.”

- “Definitions and guidance coming directly from the Help Desk keep changing.”

- “Changing coding in the templates in the midst of data collection is a major problem.”

- “I wish that more testing, with more varied schools, was conducted before implementing ACTS.”

Beyond implementation and capacity challenges, respondents raised broader concerns about data quality, integrity, and interpretation. Several noted that compiling data under compressed timelines, varied system structures, and evolving definitions may affect consistency and comparability across institutions at a national level.

In addition, some respondents expressed concern about the submission and future use of individual-level data. These concerns focused on privacy and identifiability, particularly for smaller institutions, as well as on questions about how the data may be interpreted or used once released.

Selected comments below reflect recurring themes across responses:

- “I seriously question the reliability and usability of the data that ED will receive in aggregate.”

- “Differences in data systems and institutional definitions means there will be huge variability in the data collected.”

- “Collection of historical data announced after-the-fact will be subject to significant data quality problems.”

- “I am uncomfortable with a process that requires uploading individual-level student data… Even aggregated data identify many of our students individually.”

- “I’m concerned with what they are going to do with these indexed summaries.”

- “I have serious doubts that the data we submit will be used responsibly.”

Summary

Findings from AIR’s February 2026 survey indicate that institutions are actively engaged in ACTS reporting but are experiencing considerable strain in doing so. At the time of the survey, most respondents were still in the data compilation phase, and a majority expressed concern about their ability to submit accurate data by the March 18 deadline.

Quantitative and qualitative findings consistently point to three central factors shaping the reporting experience: staffing capacity constraints, the complexity of managing data across multiple systems, and challenges interpreting evolving definitions and guidance. Institutions describe ACTS not as a routine compliance task, but as a resource-intensive reporting effort layered onto existing reporting responsibilities.

In addition to structural and process challenges, respondents raised broader concerns about data quality, comparability across institutions, and the future interpretation or use of submitted data. These concerns reflect uncertainty about whether data compiled under compressed timelines and varying system architectures will be consistent at a national level.

Taken together, the findings suggest that institutional readiness for ACTS is driven primarily by structural capacity realities, implementation timing, and definitional clarity. Addressing these factors will be central to supporting accurate and sustainable reporting moving forward.

Suggested Citation

Jones, D., and Keller, C. (2026). ACTS Progress and Barriers Survey Report. Brief. Association for Institutional Research. http://www.airweb.org/community-surveys.

About

AIR conducts community surveys on a variety of topics to gather in-the-moment understanding from data professionals working in higher education.

Image Descriptions

Chart 1

| Status | Percent of Total |
| --- | --- |
| Have submitted summary files to IPEDS | 4% |
| Reviewing summary files from Aggregator Tool | 1% |
| Submitted data to Aggregator Tool; working through errors | 2% |
| Compiled, cleaned, and coded most of the data | 12% |
| Actively compiling data | 66% |
| In early planning stages | 12% |
| Not begun yet | 3% |
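The 81% figure cited alongside Chart 1 can be checked against these percentages. The sketch below is a minimal illustration rather than AIR's own method: it assumes the 81% combines the three earliest statuses (not begun, early planning, actively compiling), and the variable names and groupings are illustrative only.

```python
# Chart 1 percentages, copied from the table in this report.
chart1 = {
    "Have submitted summary files to IPEDS": 4,
    "Reviewing summary files from Aggregator Tool": 1,
    "Submitted data to Aggregator Tool; working through errors": 2,
    "Compiled, cleaned, and coded most of the data": 12,
    "Actively compiling data": 66,
    "In early planning stages": 12,
    "Not begun yet": 3,
}

# Assumption: these three statuses are the ones behind the 81% in the text.
EARLY_STATUSES = (
    "Not begun yet",
    "In early planning stages",
    "Actively compiling data",
)

# Assumption: these statuses count as Aggregator Tool or submission stages.
LATE_STATUSES = (
    "Submitted data to Aggregator Tool; working through errors",
    "Reviewing summary files from Aggregator Tool",
    "Have submitted summary files to IPEDS",
)

early_share = sum(chart1[status] for status in EARLY_STATUSES)
late_share = sum(chart1[status] for status in LATE_STATUSES)
total_share = sum(chart1.values())

print(f"Early stages: {early_share}%")
print(f"Aggregator Tool or submission stages: {late_share}%")
print(f"All statuses: {total_share}%")
```

Under that grouping, the early statuses sum to the 81% reported in the text, and the seven statuses account for all respondents.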

Chart 2

| Potential Challenge | Not a challenge | Minor challenge | Moderate challenge | Major challenge |
| --- | --- | --- | --- | --- |
| Technical/system limitations | 14% | 34% | 31% | 21% |
| Data quality/consistency issues | 5% | 27% | 38% | 30% |
| Interpreting ACTS definitions/guidance | 4% | 22% | 41% | 33% |
| Data availability across systems | 4% | 25% | 36% | 35% |
| Staffing capacity/workload constraints | 1% | 8% | 26% | 65% |
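The "moderate or major challenge" shares discussed in the report, such as the 91% for staffing capacity, are the sum of the last two columns of this table. A minimal sketch of that arithmetic follows; the dictionary layout and the helper name `moderate_or_major` are illustrative assumptions, not part of the survey materials.

```python
# Chart 2 percentages, copied from the table in this report.
# Tuple order: (not a challenge, minor, moderate, major).
chart2 = {
    "Technical/system limitations": (14, 34, 31, 21),
    "Data quality/consistency issues": (5, 27, 38, 30),
    "Interpreting ACTS definitions/guidance": (4, 22, 41, 33),
    "Data availability across systems": (4, 25, 36, 35),
    "Staffing capacity/workload constraints": (1, 8, 26, 65),
}


def moderate_or_major(row):
    """Return the combined share rating an item a moderate or major challenge."""
    _not_a_challenge, _minor, moderate, major = row
    return moderate + major


combined = {name: moderate_or_major(row) for name, row in chart2.items()}

# List the challenges from most to least widely reported.
for name, share in sorted(combined.items(), key=lambda item: item[1], reverse=True):
    print(f"{share:>3}%  {name}")
```

Sorting by the combined share places staffing capacity first, which matches the report's emphasis on staffing as the central constraint.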

Chart 3

| Chart 2. Structural and Capacity Constraints | Not a challenge | Minor challenge | Moderate challenge | Major challenge |
| --- | --- | --- | --- | --- |
| Technical/system limitations | 14% | 34% | 31% | 21% |
| Data availability across systems | 4% | 25% | 36% | 35% |
| Staffing capacity/workload constraints | 1% | 8% | 26% | 65% |